Next-Generation Chips and GPUs are reshaping computing across consumer devices, data centers, and specialized workloads. They enable smarter devices, more capable AI features, and better efficiency for everyday tasks, while software developers increasingly adopt domain-specific accelerators and cross-platform optimization strategies.

Chiplet architectures and modular designs are redefining how silicon is assembled and tested. Manufacturers can mix and match blocks such as CPU cores, memory controllers, and accelerators for tailored performance in gaming, AI training, and enterprise analytics. The same modularity improves yields, helps manage yield variability, eases upgrades, enables rapid prototyping, and supports multi-die interconnects for targeted workloads.

AI accelerators embedded alongside traditional GPU cores speed up workloads from inference to real-time analytics, while the industry continues to migrate toward 3nm process nodes for higher transistor density and better efficiency. Combined with software optimizations that reduce memory pressure, these advances show up in industry benchmarks as better performance per watt and lower effective latency across diverse ML models and data pipelines.

Advanced GPUs now emphasize ray tracing, delivering photorealistic lighting and scalable performance for complex scenes, virtual production, and immersive simulations across gaming, design, and research. Open standards and vendor-agnostic toolchains are widening access across hardware, and GPU adoption now ranges from cinematic rendering to industrial simulation, which calls for robust SDKs, profiling tools, and cross-platform APIs.

Across industries, GPU compute trends point to larger, more capable compute units and smarter interconnects that raise performance while managing power, thermal envelopes, and software ecosystem readiness in data centers, edge devices, and cloud platforms. As workloads scale, enterprises seek predictable performance, simplified management, and automated orchestration across hybrid compute environments.

A new era of silicon hardware is emerging, driven by modular, multi-die architectures and specialized accelerators that optimize workloads from AI to simulations. Beyond traditional graphics chips, cutting-edge design targets smaller process nodes, high-density interposers, and rapid time-to-market. Industry watchers see GPUs evolving into versatile compute engines that blend graphics, data analytics, and machine learning with advanced memory hierarchies.

Next-Generation Chips and GPUs: Embracing Chiplet Architectures for Scalable Performance

Chiplet architectures enable a modular approach to silicon, letting vendors combine multiple smaller dies in a single package. This design philosophy shortens time to market, improves yields, and enables workload-specific configurations by mixing CPU cores, memory controllers, and accelerators. For GPUs, chiplets can unlock higher shader counts and broader interconnect options, delivering scalable performance while preserving efficiency. As GPU compute workloads diversify, modular design supports everything from real-time graphics to AI inference without forcing all functions onto a single monolithic die.
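The yield claim above can be illustrated with a back-of-the-envelope calculation. The sketch below assumes a simple Poisson defect model (yield = exp(-area × defect density)), assumes dies are tested before packaging, and ignores packaging losses and the extra area that die-to-die interconnects require. The function names, die sizes, and defect density are hypothetical values chosen for illustration, not figures from any vendor.

```python
import math


def poisson_yield(area_mm2: float, defects_per_mm2: float) -> float:
    """Fraction of dies expected to have zero defects (simple Poisson model)."""
    return math.exp(-area_mm2 * defects_per_mm2)


def silicon_per_good_product(total_area_mm2: float, num_dies: int,
                             defects_per_mm2: float) -> float:
    """Expected wafer area consumed per working product.

    Assumes each die is tested before assembly, so a defect only
    scraps the small die it lands on, not the whole product.
    """
    die_area = total_area_mm2 / num_dies
    die_yield = poisson_yield(die_area, defects_per_mm2)
    return num_dies * die_area / die_yield


# Hypothetical numbers: 600 mm^2 of logic, 0.001 defects per mm^2.
DEFECT_DENSITY = 0.001
monolithic = silicon_per_good_product(600.0, 1, DEFECT_DENSITY)
chiplets = silicon_per_good_product(600.0, 4, DEFECT_DENSITY)

print(f"monolithic die yield: {poisson_yield(600.0, DEFECT_DENSITY):.1%}")
print(f"150 mm^2 chiplet yield: {poisson_yield(150.0, DEFECT_DENSITY):.1%}")
print(f"silicon per good product: {monolithic:.0f} mm^2 vs {chiplets:.0f} mm^2")
```

Under these assumptions the four-chiplet design wastes noticeably less silicon per working product, because each defect discards a quarter-size die. Real designs give some of that advantage back to packaging cost and interconnect overhead.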

By decoupling functional blocks, teams can optimize each tile for its role, such as a high-bandwidth memory tile paired with AI accelerators or a ray tracing module. This arrangement also helps with supply chain resilience and design reuse across product families. Developers should expect heterogeneous memory hierarchies and APIs that expose the combined hardware capabilities, enabling better utilization of the next generation of chips and GPUs.

AI Accelerators Driving Heterogeneous Compute Engines in Modern GPUs

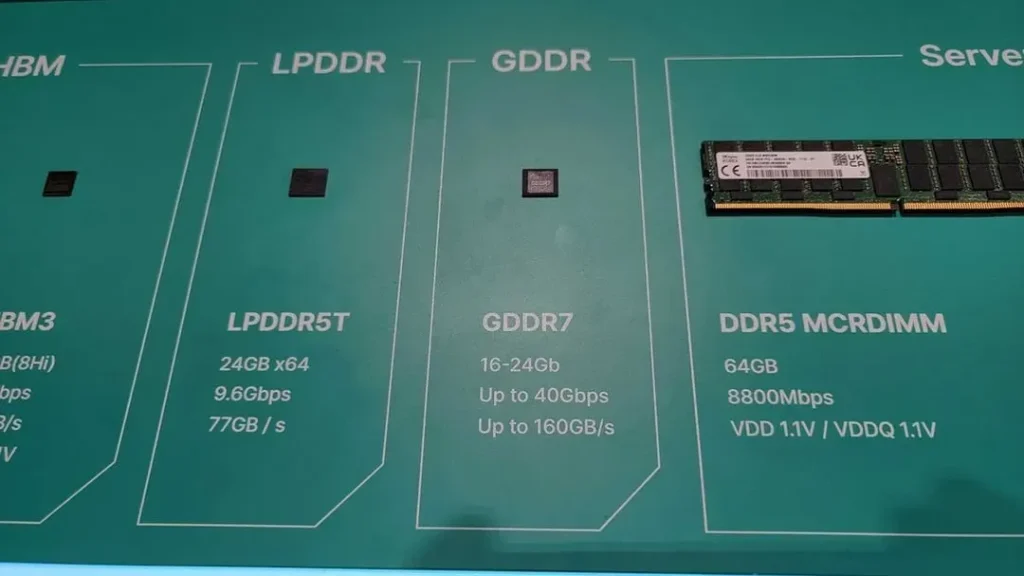

AI accelerators are becoming core to modern GPUs, offering specialized tensor cores, matrix-multiply engines, and high-bandwidth memory to accelerate deep learning, large language models, and real-time analytics. In chiplet-based GPUs, these accelerators enable significant throughput improvements while keeping energy use in check. Integrating them with traditional graphics units creates heterogeneous compute engines that adapt to workload demand.

To maximize value, software teams must optimize memory scheduling and precision, mapping neural network operations to accelerators with libraries and runtimes that understand mixed-precision computing. Enterprises evaluating AI workloads should measure real-world latency and throughput, not just peak teraflops, and design systems that use accelerators alongside CPUs.
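To see why precision choices matter, the following minimal sketch emulates half-precision rounding using only the Python standard library (the `struct` module's `"e"` format is IEEE 754 binary16). It sums the same low-precision inputs twice: once with a half-precision accumulator and once with a wider one. Python's double-precision float stands in here for the wider accumulator that mixed-precision hardware typically provides; the helper names and input values are illustrative assumptions.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("e", struct.pack("e", x))[0]


def sum_fp16_accumulator(values):
    """Accumulate in half precision: every partial sum is rounded to fp16."""
    acc = 0.0
    for v in values:
        acc = to_fp16(acc + to_fp16(v))
    return acc


def sum_wide_accumulator(values):
    """Mixed precision: fp16 inputs, but partial sums kept in a wider type."""
    acc = 0.0
    for v in values:
        acc += to_fp16(v)
    return acc


values = [0.001] * 10_000  # exact sum would be 10.0
narrow = sum_fp16_accumulator(values)
wide = sum_wide_accumulator(values)

print(f"fp16 accumulator: {narrow}")
print(f"wide accumulator: {wide:.4f}")
```

The half-precision accumulator stalls far below the true total once the running sum grows large enough that each small addend rounds away, while the wide accumulator stays close to 10. This is the usual motivation for keeping inputs in low precision but accumulating in a higher one.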

3nm Process Nodes: Performance, Power, and Manufacturing Realities

The push to 3nm process nodes promises substantial improvements in transistor density and performance per watt, potentially enabling more capable GPUs in the same power envelope. However, 3nm brings manufacturing challenges, pressure on yields, and increased design complexity that require new EDA flows and verification strategies. The industry is responding with innovations such as gate-all-around transistors and advanced lithography to close the gap between theoretical and real-world gains.

For GPU designers, the 3nm transition means balancing thermal design, supply chain stability, and architectural innovation to deliver higher clock rates and larger compute pipelines without sacrificing reliability. Chips targeting 3nm must therefore reconcile manufacturing realities with software optimizations, memory bandwidth, and interconnect efficiency to realize the promised performance-per-watt improvements.

Ray Tracing GPUs: Real-Time Rendering and Immersive Graphics

Ray tracing GPUs are redefining real-time graphics by accelerating photorealistic lighting, reflections, and global illumination. The next generation prioritizes ray tracing acceleration, wider memory bandwidth, and smarter work scheduling to keep frame times tight in complex scenes. In practice, this translates to more immersive games, richer visualization, and on-device rendering for AR/VR and mobile workloads that previously required offline rendering.

Developers need to update rendering pipelines, prune shader workloads, and adopt SDKs that expose ray tracing capabilities across a wider set of hardware. The shift also encourages more scalable workflows, such as hybrid rendering and denoising, that maintain fidelity without sacrificing performance on GPUs that pair ray tracing cores with tensor cores for AI-accelerated denoising.

GPU Compute Trends: Expanding Beyond Graphics to AI Inference and HPC

GPU compute trends show GPUs evolving from pure graphics engines into versatile general-purpose compute platforms. Larger, more capable compute units, faster interconnects, and smarter memory hierarchies reduce data movement bottlenecks for HPC, analytics, and AI inference. This data-centric shift rewards software that emphasizes data locality, parallelization, and efficient memory footprints.
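The emphasis on data movement can be made concrete with the roofline model, which caps attainable throughput at the lower of peak compute and memory bandwidth multiplied by arithmetic intensity (floating-point operations per byte moved). The sketch below is a simplified illustration: the device figures are hypothetical, and the matrix-multiply intensity assumes the ideal case where each matrix crosses the memory bus exactly once.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline bound: limited by compute or by memory traffic, whichever is lower."""
    return min(peak_gflops, bandwidth_gb_s * flops_per_byte)


def vector_add_intensity(bytes_per_element: int = 4) -> float:
    """c[i] = a[i] + b[i]: one flop per element, two reads and one write."""
    return 1.0 / (3 * bytes_per_element)


def matmul_intensity(n: int, bytes_per_element: int = 4) -> float:
    """n x n matrix multiply: 2*n^3 flops over 3*n^2 elements moved (ideal reuse)."""
    return (2.0 * n ** 3) / (3.0 * n ** 2 * bytes_per_element)


# Hypothetical device: 20,000 GFLOP/s peak compute, 1,000 GB/s memory bandwidth.
PEAK_GFLOPS = 20_000.0
BANDWIDTH_GB_S = 1_000.0

vec = attainable_gflops(PEAK_GFLOPS, BANDWIDTH_GB_S, vector_add_intensity())
mat = attainable_gflops(PEAK_GFLOPS, BANDWIDTH_GB_S, matmul_intensity(1024))

print(f"vector add:     {vec:8.1f} GFLOP/s (memory-bound)")
print(f"1024^2 matmul:  {mat:8.1f} GFLOP/s (compute-bound)")
```

On this hypothetical device the vector add reaches well under one percent of peak compute because almost all its time goes to moving data, which is why locality and reuse matter more than raw FLOP counts for many workloads.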

Beyond graphics, developers can harness GPUs for simulations, training, and large-scale inference pipelines, taking advantage of higher memory bandwidth and optimized tensor operations. As AI workloads continue to grow, optimizing for mixed workloads and scheduling across accelerators becomes essential to achieving sustained throughput in production environments.

Energy Efficiency and Thermal Design: Sustainable Compute for Edge to Data Center

Energy efficiency remains a governing constraint as compute scales. Architectural decisions such as smarter caches, power gating, and improved branch prediction, combined with the advantages of advanced process nodes, help deliver more performance per watt. Thermal design power (TDP) considerations shape device form factors, cooling strategies, and system-level layouts across laptops, data centers, and edge devices.

For data centers and edge deployments, sustainable computing means reducing total cost of ownership while maintaining peak performance for AI training, inference, and large-scale simulations. Efficient GPUs with optimized power envelopes enable longer run times, cooler operation, and more predictable maintenance cycles, aligning hardware advances with software efficiency and environmentally conscious design goals.
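As a rough illustration of how performance per watt feeds into operating cost, the sketch below compares two hypothetical accelerators. All throughput, power, and electricity price figures are made-up assumptions, and the calculation covers only the device's own energy use, not cooling, facility overhead, or purchase price.

```python
HOURS_PER_YEAR = 24 * 365


def perf_per_watt(throughput_per_s: float, avg_power_w: float) -> float:
    """Work completed per second for each watt drawn."""
    return throughput_per_s / avg_power_w


def annual_energy_kwh(avg_power_w: float) -> float:
    """Energy used in a year of continuous operation at the given average power."""
    return avg_power_w * HOURS_PER_YEAR / 1000.0


def annual_energy_cost(avg_power_w: float, price_per_kwh: float) -> float:
    """Yearly electricity cost for the device alone."""
    return annual_energy_kwh(avg_power_w) * price_per_kwh


# Hypothetical accelerators: (inferences per second, average power in watts).
devices = {"accelerator_a": (1000.0, 400.0), "accelerator_b": (1200.0, 300.0)}
PRICE_PER_KWH = 0.12  # assumed electricity price, in arbitrary currency units

for name, (throughput, power) in devices.items():
    print(f"{name}: {perf_per_watt(throughput, power):.2f} inferences/s per watt, "
          f"{annual_energy_kwh(power):.0f} kWh/year, "
          f"{annual_energy_cost(power, PRICE_PER_KWH):.2f} per year")
```

A comparison like this is only a starting point; a fuller total-cost-of-ownership estimate would add hardware cost, cooling, and utilization.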

Frequently Asked Questions

What are chiplet architectures and how do they impact Next-Generation Chips and GPUs?

Chiplet architectures combine several modular dies in a single package. For Next-Generation Chips and GPUs, this design improves yields, reduces time-to-market, and enables flexible interconnects and mixed accelerators. In GPUs, chiplets support higher shader counts, better memory bandwidth, and configurable hardware blocks tailored to diverse workloads.

How do AI accelerators in Next-Generation Chips and GPUs boost AI workloads?

AI accelerators, including tensor cores and optimized matrix-multiply engines, are integrated with high-bandwidth memory in Next-Generation Chips and GPUs. They deliver higher real-world throughput for deep learning, inference, and real-time analytics within heterogeneous architectures. Key factors include memory scheduling, precision choices, and software libraries that map neural networks efficiently.

What role do 3nm process nodes play in the performance and efficiency of Next-Generation GPUs?

3nm process nodes offer higher transistor density and better performance-per-watt, enabling more capable and energy-efficient GPUs. They bring manufacturing and design challenges, such as tighter design rules and advanced lithography, requiring new EDA flows and thermal management strategies to realize the expected gains in Next-Generation Chips and GPUs.

Why are ray tracing GPUs important in real-time graphics within Next-Generation GPUs?

Ray tracing GPUs provide photorealistic lighting and reflections in real time, transforming gaming, visualization, and AR/VR workloads. The next generation emphasizes ray tracing acceleration, higher memory bandwidth, and smarter scheduling to stabilize frame times. Developers should update rendering pipelines and use APIs and SDKs that expose ray tracing across hardware.

What are current GPU compute trends affecting data centers and HPC in Next-Generation Chips and GPUs?

GPU compute trends show GPUs expanding from graphics into general-purpose compute for HPC, data analytics, and simulations. Expect larger compute units, faster interconnects, and smarter memory hierarchies that reduce data movement. Software should optimize memory locality and parallelism to boost AI inference, simulations, and real-time analytics on Next-Generation Chips and GPUs.

How should teams prepare software and ecosystem readiness for Next-Generation Chips and GPUs?

Teams should prepare with updated compilers, libraries, and runtimes that map workloads to new accelerators. Stay current with CUDA, ROCm, or SYCL, and optimize for mixed precision and memory locality. Plan for heterogeneity in chiplet-based designs and ensure deployment pipelines support diverse devices to maximize performance on Next-Generation Chips and GPUs.

| Topic | Key Points | Impact |
|---|---|---|
| Chiplet Architectures and Modular Design | Moves from monolithic dies to chiplets; enables faster time-to-market, better yields, and the ability to mix and match CPU cores, memory controllers, and accelerators for a given workload; GPUs benefit from higher interconnect flexibility and yields to support scalable shader counts and bandwidth. | Supports scalable, workload-specific configurations and efficient hardware utilization. |
| AI Accelerators and Dedicated ML Hardware | Embedded tensor cores, optimized matrix-multiply engines, and high-bandwidth memory accelerate AI workloads; heterogeneous architectures pair AI blocks with traditional graphics/compute units; software libraries and memory scheduling/precision choices are key. | Improved real-world AI throughput, energy efficiency, and latency; requires software optimization for accelerators. |
| 3nm and Beyond: Process Nodes | Smaller nodes boost performance-per-watt and transistor density but introduce manufacturing challenges, tighter design rules, and new EDA/workflow needs (gate-all-around transistors, lithography, design verification); thermal and supply-chain considerations become critical. | Enables higher efficiency and density with caveats for production and design complexity. |
| Ray Tracing GPUs and Real-Time Graphics | Real-time ray tracing, higher memory bandwidth, and smarter scheduling extend photorealistic rendering to games, visualization, and AR/VR; updated pipelines and SDKs are required to expose capabilities across hardware. | Delivers more immersive visuals and broader applicability of ray tracing across workloads. |
| GPU Compute Trends: General-Purpose Compute | GPUs grow in compute units, interconnects, and memory hierarchies; data-centric architectures favor locality, parallelization, and reduced data movement for HPC, analytics, and simulations; software must optimize workloads for memory patterns and mixed-precision compute. | Unlocks higher throughput for AI inference, simulations, and real-time analytics; emphasizes software optimization. |
| Energy Efficiency and Thermal Design | Architectural efficiency improvements and advanced process nodes boost performance-per-watt; TDP, cooling, and form factors influence device design; edge devices benefit from longer battery life while data centers gain lower operating costs and reduced climate impact. | Lower operating costs and compact, cooler devices; supports scalable deployments. |
| Software and Ecosystem Readiness | Updated compilers, libraries, and runtimes (e.g., CUDA, ROCm, SYCL) map workloads to accelerators; handling device heterogeneity and memory locality is essential; ecosystem tools enable performance optimization across chiplet designs. | Accelerates realization of hardware potential and enables broader adoption. |
| Industry Implications: From Consumer GPUs to Data Centers | Consumer GPUs, data-center AI inference, research computing, and analytics pipelines are shaped by accelerator-rich architectures; hybrid cloud and on-demand provisioning require software orchestration across CPUs/GPUs; TCO and upgrade planning are critical. | Informed strategic planning and long-term investment across products and platforms. |
| Security and Reliability Considerations | Hardware-level security features (secure boot, memory protection, enclaves), fault tolerance, and secure software updates are essential for protecting data and workloads on complex compute platforms. | Supports trusted, stable platforms for mission-critical applications. |

Summary

Next-Generation Chips and GPUs are redefining how we think about computing across consumer devices, data centers, and specialized workloads. This evolution is driven by chiplet modularity, AI accelerators, and advancing process nodes, complemented by real-time ray tracing and expanded GPU compute capabilities. A focus on energy efficiency, robust software ecosystems, and security ensures these advances translate into practical, scalable deployments. To succeed, organizations should embrace heterogeneous compute, optimize software for data locality and mixed precision, and align hardware, software, and supply chains to maximize the impact of Next-Generation Chips and GPUs.